What is the difference between “calculate” and “compute”?

Light & flexible

I assure you, we are not going to dwell on such quintessential computing terms, which might bore some of us, even if the title gives that impression 😃

This is just a bit of curiosity that leads to the crux of what we are going to go through.

Calculation involves an arithmetic process. Computation covers the non-arithmetic steps of an algorithm, the steps that actually bring things up to the point of calculation.

You get the idea of where I am going with this, right? We can visualize every stage of data processing, from data collection and cleansing to processing and then transforming the data through mathematical operations, as mapping data into something that makes more sense, i.e. “Insight.” The intelligence behind such meaningful transformation used to be human intervention, which can now be “Artificial,” in line with the new digital trend.
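To make the distinction concrete, here is a minimal Python sketch of those stages. The readings and the function names `cleanse` and `summarize` are made up for illustration; the non-arithmetic cleansing step stands in for "computation", and the arithmetic summary stands in for "calculation".

```python
from statistics import mean

# Hypothetical raw readings, as they might arrive from data collection.
raw_readings = ["21.5", " 22.0", "n/a", "", "23.5", "21.0"]


def cleanse(values):
    """Computation: non-arithmetic steps (trim, validate, convert)."""
    cleaned = []
    for value in values:
        text = value.strip()
        try:
            cleaned.append(float(text))
        except ValueError:
            # Skip entries that are not numbers, such as "n/a" or "".
            continue
    return cleaned


def summarize(values):
    """Calculation: plain arithmetic on the cleaned numbers."""
    return {
        "count": len(values),
        "average": mean(values),
        "highest": max(values),
    }


readings = cleanse(raw_readings)
insight = summarize(readings)
print(insight)
```

The dictionary printed at the end is the small "insight" here: a handful of messy strings reduced to a count, an average, and a maximum.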

Getting to know …

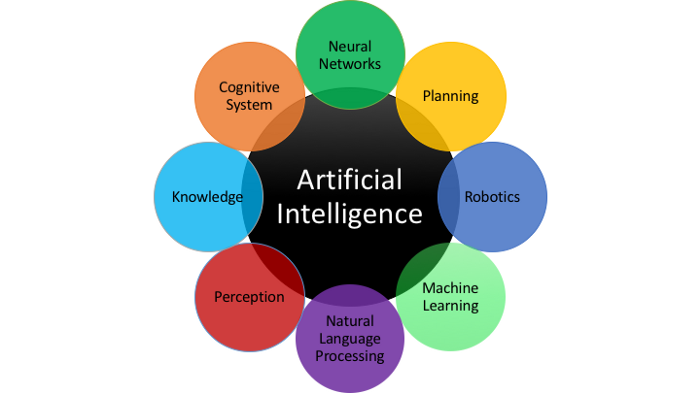

Artificial Intelligence in industry will change the way we produce, manufacture, and deliver. Cognitive computing, machine learning, and natural language processing are different aspects that have emerged as the technology has progressed in recent years. They all encapsulate the idea that machines could one day be taught to learn and adapt by themselves, rather than having to be spoon-fed every instruction for every eventuality. We can track certain important emerging digital trends as technology and the future converge very fast. Years ago, the industrial revolution irreversibly reshaped society, and another revolution is now underway with potentially even further-reaching consequences. These digital trends are all potentially disruptive unless we plan ahead for the coming impact and change. The likely benefits are greater agility, smarter business processes, and better productivity from focusing effort on the right things.

Goals of Artificial Intelligence

Artificial intelligence (AI) is becoming ubiquitous in business across industries, where machine brains are fundamentally transforming decision-making. The trend is driven by the need for faster and smarter decisions and for managing the big data that can make the difference. The convergence of big data with AI is inevitable, as the automation of smarter decision-making is the next evolution of big data. While adapting to this change, some will inevitably prosper and some will fail. Those who manage to succeed are likely to be those who can see beyond the hype and understand how this technology can add real value and drive positive change.

The best way to look at AI is as the automation of things that have already been worked out and implemented logically to solve a problem. This lets existing problem-solving logic be applied effectively and smartly by artificial brains, while human brains focus their efforts on problems that still need more attention. Through effective use of AI, we can imagine a world where machines and devices all communicate with each other to get the work done (IoT), leaving us free to relax and enjoy life.

Let’s wander around this digital trend and explore how it can be integrated for a better life 😊

#bigdata is becoming the norm for many organizations, which use it to profile people, analyze their behavioral patterns, and inform their decision-making processes, whether for basic day-to-day processes or strategic business decisions.

#ArtificialIntelligence or #AI is stepping out of the world of science-fiction and into real life, providing the ‘thinking’ ability behind virtual personal assistants, automated robots, and smart cars.

#MachineLearning algorithms are providing intelligence for discovering patterns in the huge amount of data that traditional data analysis couldn’t hope to find, helping to detect fraud and diagnose diseases.

I tried interacting with Google Home, an AI application developed by Google, and asked it some questions related to this trend. The conversation follows:

Me: What is Data Analytics?

Google Home: Data Analytics refers to qualitative and quantitative techniques and processes used to enhance productivity and business gain. Data is extracted and categorized to identify and analyze behavioral data and patterns, and techniques vary according to organizational requirements.

“Ok Google”

Me: What is Machine Learning?

Google Home: Machine learning is a type of artificial intelligence (AI) that provides computers with the ability to learn without being explicitly programmed. Machine learning focuses on the development of computer programs that can change when exposed to new data. … Both systems search through data to look for patterns.

Me: What is Artificial Intelligence?

Google Home: the theory and development of computer systems able to perform tasks normally requiring human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

Well, that’s cool.

Artificial Intelligence is often classified into one of two fundamental groups: applied and general.

Applied AI is more common — systems designed to trade stocks and shares intelligently, or maneuver an autonomous vehicle would fall into this category.

Generalized AI — systems or devices which can, in theory, handle any task — are less common, but this is where some of the most exciting advancements are happening today. It is also the area that has led to the development of Machine Learning. Often referred to as a subset of AI, it’s really more accurate to think of it as the current state-of-the-art.

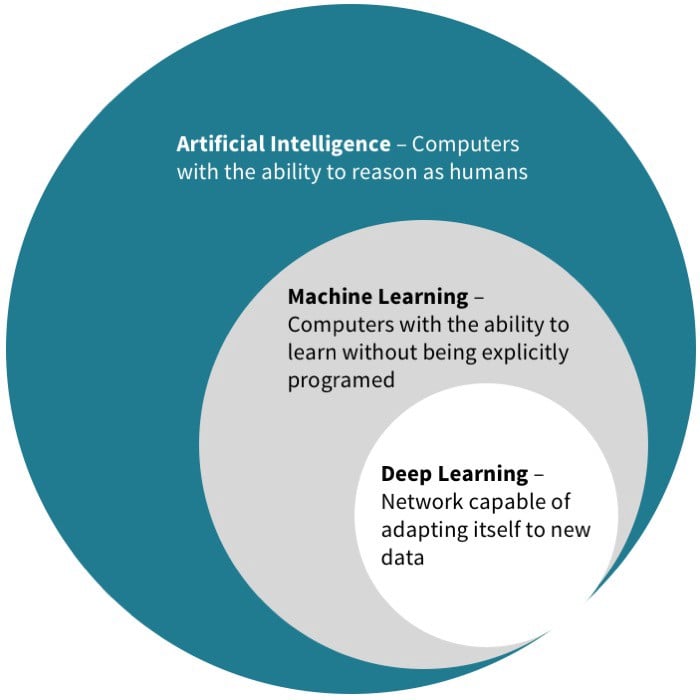

The relation between Artificial Intelligence and Machine Learning:

Artificial Intelligence (human intelligence exhibited by machines) is the broader concept of machines performing tasks in ways that imitate human intelligence.

Machine Learning is one approach, among several, to achieving Artificial Intelligence. It is an application of AI built around the idea that machines should be given access to information and allowed to learn for themselves.

Deep Learning has enabled many practical applications of Machine Learning and, in turn, of the overall field of AI. It breaks down tasks in ways that make all kinds of machine assistance seem possible, even likely.
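As a small illustration of "learning from data rather than being explicitly programmed", here is a hedged Python sketch. It is not how any particular product works; it simply fits a straight line to a few made-up points using ordinary least squares, one of the simplest learning techniques. The function name `fit_line` and the sample data are assumptions for this example.

```python
def fit_line(xs, ys):
    """Estimate slope and intercept from examples (ordinary least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how much x varies on its own.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up examples. The rule behind them (y = 2x + 1) is never written
# into the program; it has to be recovered from the data.
xs = [1, 2, 3, 4, 5]
ys = [3, 5, 7, 9, 11]

slope, intercept = fit_line(xs, ys)

# Use the learned parameters on an input the program has not seen before.
prediction = slope * 10 + intercept
print(slope, intercept, prediction)
```

Feeding in different examples changes the learned parameters without touching the code, which is the essence of the idea described above.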

Concept evolution!

As technology and our understanding of the human mind have progressed, our concept of AI has changed. Rather than performing increasingly complex calculations, work in the field of AI has concentrated on imitating human decision-making processes and carrying out tasks in ever more human-like ways. As innovations took hold, engineers realized that rather than instructing computers and machines step by step, it would be far more efficient to code them to think and learn like the human brain, and to use the internet as a learning platform that gives them access to vast amounts of information.

Neural networks have played the key role in making computers think and understand the world the way we do, while retaining the innate advantages they hold over us, such as speed, accuracy, and lack of bias.

The next advancement goes a step further: it spares developers the complexity of learning AI concepts and the algorithmic journey of ML by providing a platform on which an AI application can be built with simple logistics, freeing them to focus on the problem statements they want to solve.

It is good to see some industry leaders taking an interest in this and making complex technologies such as AI and ML available as simple platforms for creating voice and text assistants, addressing this perspective of data science.

Google api.ai

Amazon Alexa

Facebook wit.ai

And many more in the market. Such initiatives will always be appreciated.

About Google API.AI — Understand Google api.ai and build AI Assistant

Looking at the other side of this …

There are concerns that this technology will lead to widespread unemployment. That topic is beyond the scope of this discussion, but it does touch on a point we should consider. Employees are often a business’s biggest expense, but does that mean it’s sensible to think of AI as primarily a means of cutting HR costs?

I don’t think so.

Think about it!

The fully autonomous, AI-powered, human-free industrial operation seems to be far from becoming a reality. Human employees working alongside AI machines are likely to be the way of things. How can an intelligence developed by humans REPLACE a human? Surely it can replace a human's repetitive, mechanizable effort in the places where artificial intelligence can work. So if you’re looking to generate value in the near future, thinking about ways to empower humans with technology rather than replace them is likely to be more productive. In doing so, we can free people to put all of their creativity, passion, and imagination into thinking about the bigger opportunities ahead of us.

Trends are only disruptive if we are unprepared to factor them into our strategy. How trends impact our workforce, customers, market, services, and our lives should be carefully pondered. Perhaps most importantly, a business needs a clear use case and a genuine understanding of how and why it can gain value from a trend. With anything new and exciting in business, there’s often a race to be involved, driven primarily by a fear of being left behind. Scrambling to automate and smarten an enterprise without a clear outlook on what you hope to achieve is a misdirection of intelligence.

As Mark Zuckerberg said, “A frustration I have is that a lot of people increasingly seem to equate an advertising business model with somehow being out of alignment with your customers, … I think it’s the most ridiculous concept. What, you think because you’re paying Apple that you’re somehow in alignment with them? If you were in alignment with them, then they’d make their products a lot cheaper!”

Another frustration we should feel is that the efforts we put into various technology trends increasingly seem to be out of alignment with their use and their impact on our lives. I think that is an even more ridiculous concept. To be productive, efforts need to be meticulous and pointed in the proper direction, and AI can help find this direction quickly and easily. If we aligned ourselves with the constructive use and right influence of technology trends, it would make our lives easier and happier!

Let’s embrace the change and explore integrity!

Image credits: Google

References:

- Difference Between Artificial Intelligence, Machine Learning, and Deep Learning?

- https://en.wikiquote.org

- Difference between Artificial Intelligence and Machine Learning