This story sheds more light on Apache NiFi and how it can be used with the Hortonworks distribution.

Here you will learn what NiFi is, why it is often preferred over other tools on the market, how its architecture works, and how to integrate it with an HDP cluster, with hands-on video examples.

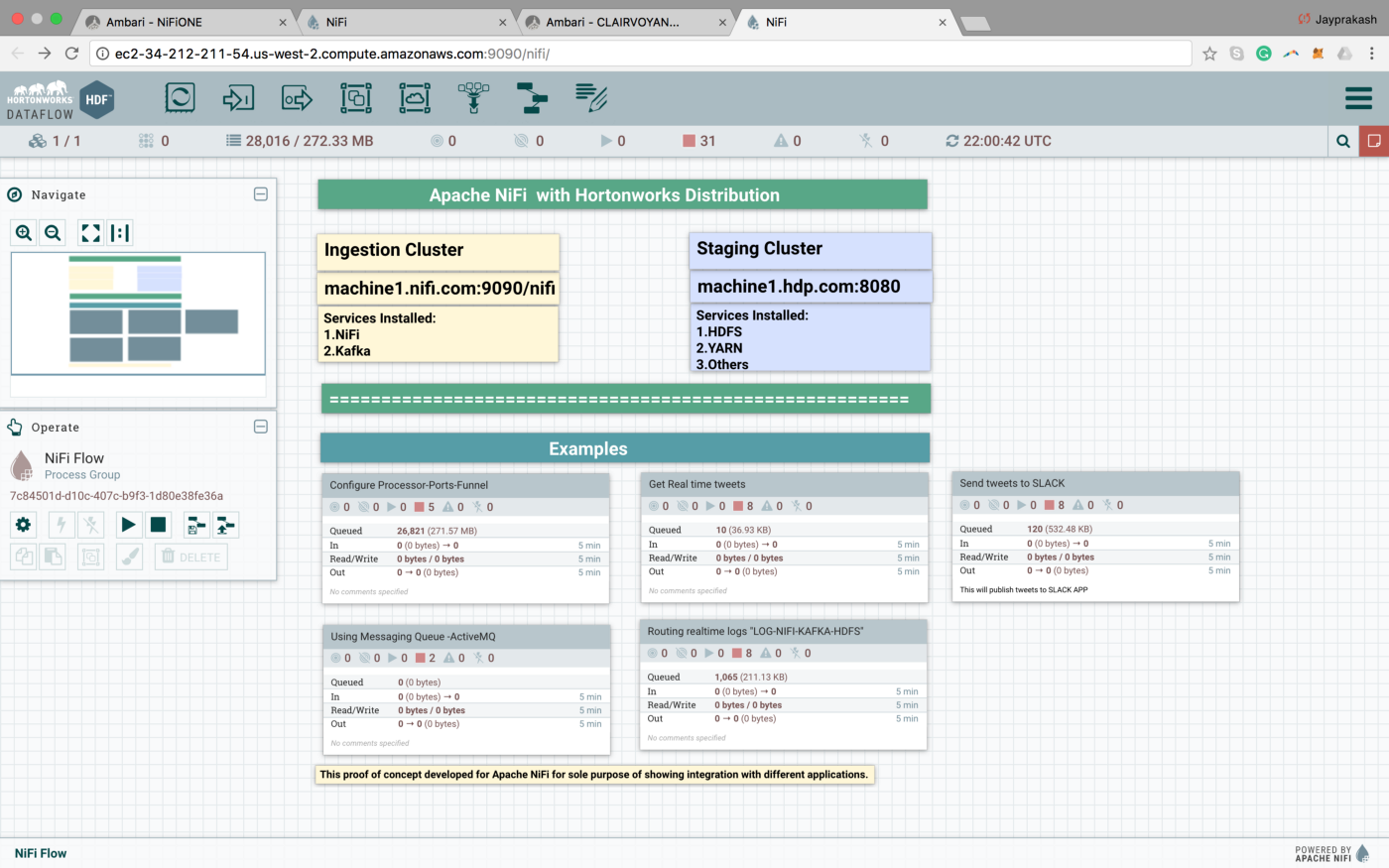

Image: Hands-on with Apache NiFi on the Hortonworks distribution

Apache NiFi supports powerful and scalable directed graphs of data routing, transformation, and system mediation logic. — https://nifi.apache.org/

Alternatively, we can call it an easy-to-use, powerful, and reliable system to process and distribute data.

There are many tools available from the open source community, but NiFi stands out at managing streaming data in real time, giving easy control over data inputs, outputs, transport, and transformations.

Sensing this opportunity and contributing to it, Hortonworks created a wonderful platform, “Hortonworks DataFlow” (HDF), which integrates Apache NiFi/MiNiFi, Apache Kafka, Apache Storm, and Druid.

An integrated platform to collect, conduct and curate real-time data moving it from any source to destination. — https://hortonworks.com/products/data-platforms/hdf/

So at this point in the story, we have HDF with NiFi integrated.

Why is it better than other tools?

- Handles asynchronous data from jagged/ragged edge nodes.
- Provides security, privacy, and data protection at jagged edge nodes.
- Tracks data provenance, which matters in regulated environments.
- Supports real-time, bi-directional data flow rather than traditional one-way streaming.
- Has fine-grained data lineage built in.
- Is a good fit for the IoT space.
- Best of all, it will always be open source.

In HDF, the Apache NiFi service runs on port 9090 by default, and the port can be customized. NiFi can also be run in cluster mode.
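Both the port and the cluster settings live in NiFi's `nifi.properties` file. The sketch below reads that file and reports them. It assumes the standard NiFi 1.x keys `nifi.web.http.port`, `nifi.cluster.is.node`, and `nifi.zookeeper.connect.string`, and a hypothetical `conf/nifi.properties` path; on an Ambari-managed HDF cluster these values are normally changed through Ambari rather than by editing the file directly. The parser is deliberately simplified and ignores some Java properties syntax.

```python
from pathlib import Path


def parse_properties(text: str) -> dict:
    """Parse simple key=value lines, skipping blank lines and comments.

    Simplified: real Java properties also allow ':' separators and
    line continuations, which this sketch ignores.
    """
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "!")):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def summarize(props: dict) -> dict:
    """Pick out the port and cluster settings mentioned in the article."""
    return {
        "http_port": props.get("nifi.web.http.port") or None,
        "clustered": props.get("nifi.cluster.is.node", "false").strip().lower() == "true",
        "zookeeper": props.get("nifi.zookeeper.connect.string") or None,
    }


def load_summary(path: str = "conf/nifi.properties") -> dict:
    # Hypothetical default path relative to the NiFi install directory.
    return summarize(parse_properties(Path(path).read_text(encoding="utf-8")))
```

Calling `load_summary("/path/to/nifi.properties")` on a clustered node should show `clustered` as `True` together with the ZooKeeper connect string the nodes use.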

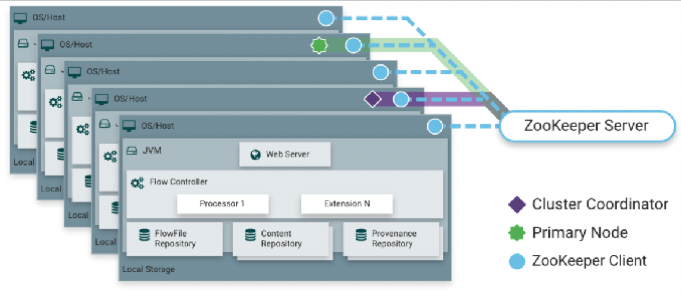

Architecture

NiFi executes within a JVM on a host operating system. It has a web server to host NiFi’s HTTP-based command and control API.
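Because the command and control API is plain HTTP, any client can talk to it. A minimal sketch, assuming an unsecured NiFi at a hypothetical `http://localhost:9090` and the NiFi 1.x `GET /nifi-api/flow/about` endpoint, which returns the instance title and version:

```python
import json
import urllib.request

BASE_URL = "http://localhost:9090/nifi-api"  # hypothetical host; HDF default port


def parse_about(payload: str) -> tuple:
    """Extract (title, version) from the JSON returned by /flow/about."""
    about = json.loads(payload)["about"]
    return about.get("title"), about.get("version")


def fetch_about(base_url: str = BASE_URL, timeout: float = 10.0) -> tuple:
    """Call the endpoint on a running, unsecured NiFi and return (title, version)."""
    with urllib.request.urlopen(f"{base_url}/flow/about", timeout=timeout) as resp:
        return parse_about(resp.read().decode("utf-8"))
```

The same web server also serves the NiFi UI, so a successful `fetch_about()` is a quick way to confirm the service is reachable before opening the canvas.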

A flow controller provides threads for extensions to run on and manages the schedule of when extensions receive resources to execute.

NiFi also uses several repositories to manage the state of its data and metadata:

1. FlowFile Repository

The FlowFile Repository is where NiFi keeps track of the state of what it knows about a given FlowFile that is presently active in the flow.

2. Content Repository

The Content Repository is where the actual content bytes of a given FlowFile live.

3. Provenance Repository

The Provenance Repository is where all provenance event data is stored.

4. NiFi in distributed/cluster mode

NiFi can also operate as a cluster. Apache ZooKeeper elects one of the nodes as the Cluster Coordinator, which is responsible for connecting and disconnecting nodes.
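To make the split between the three repositories concrete, here is a toy, in-memory model. It is not how NiFi is implemented; it only illustrates the division of labour: the FlowFile repository tracks where each FlowFile currently is, the content repository holds the bytes, and the provenance repository is an append-only history. The event names are borrowed from NiFi's provenance event types; everything else (class names, queue names) is hypothetical.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class FlowFile:
    flowfile_id: str
    attributes: dict
    content_claim: str  # a pointer into the content repository, not the bytes


@dataclass
class Repositories:
    flowfiles: dict = field(default_factory=dict)   # FlowFile repo: id -> (FlowFile, queue)
    content: dict = field(default_factory=dict)     # Content repo: claim -> bytes
    provenance: list = field(default_factory=list)  # Provenance repo: append-only events

    def _store(self, data: bytes) -> str:
        claim = str(uuid.uuid4())
        self.content[claim] = data
        return claim

    def create(self, data: bytes, attributes: dict, queue: str) -> FlowFile:
        ff = FlowFile(str(uuid.uuid4()), dict(attributes), self._store(data))
        self.flowfiles[ff.flowfile_id] = (ff, queue)
        self.provenance.append(("CREATE", ff.flowfile_id, ff.content_claim))
        return ff

    def modify(self, ff: FlowFile, new_data: bytes) -> FlowFile:
        # Content is never overwritten in this toy: new bytes get a new claim,
        # so the old version stays available to the provenance history.
        updated = FlowFile(ff.flowfile_id, dict(ff.attributes), self._store(new_data))
        _, queue = self.flowfiles[ff.flowfile_id]
        self.flowfiles[ff.flowfile_id] = (updated, queue)
        self.provenance.append(("CONTENT_MODIFIED", ff.flowfile_id, updated.content_claim))
        return updated

    def route(self, ff: FlowFile, queue: str) -> None:
        # Only the FlowFile's location changes; its content is untouched.
        current, _ = self.flowfiles[ff.flowfile_id]
        self.flowfiles[ff.flowfile_id] = (current, queue)
        self.provenance.append(("ROUTE", ff.flowfile_id, queue))
```

Keeping content behind a claim pointer is what lets the flow move and route FlowFiles cheaply while the bytes stay where they are.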

Apache NiFi @ HDP: hands-on tutorials

Let's look at some examples in a series of videos that take less than 20 minutes in total. ;)

Learning step 1: Understand the hands-on architecture of the HDF and HDP clusters

Learning step 2: Configure a processor, a funnel, and an input port in NiFi.
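The video does this on the canvas, but the same components can also be created through the REST API. This is a sketch under assumptions: an unsecured NiFi at a hypothetical `http://localhost:9090`, the NiFi 1.x endpoints `POST /process-groups/{id}/processors`, `/funnels`, and `/input-ports`, and the `root` alias for the top-level process group. `GenerateFlowFile` is used only as an example processor type.

```python
import json
import urllib.request

BASE_URL = "http://localhost:9090/nifi-api"  # hypothetical host
PATHS = {"processor": "processors", "funnel": "funnels", "input_port": "input-ports"}


def component_request(kind: str, x: float, y: float, **component) -> tuple:
    """Build (path_suffix, body) for creating a processor, funnel or input port."""
    body = {
        "revision": {"version": 0},  # newly created components start at revision 0
        "component": {"position": {"x": x, "y": y}, **component},
    }
    return PATHS[kind], body


def create(kind, group_id="root", base_url=BASE_URL, x=0.0, y=0.0, **component):
    """POST the component to the given process group and return NiFi's JSON reply."""
    suffix, body = component_request(kind, x, y, **component)
    req = urllib.request.Request(
        f"{base_url}/process-groups/{group_id}/{suffix}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, `create("processor", type="org.apache.nifi.processors.standard.GenerateFlowFile")`, `create("funnel", x=300.0)`, and `create("input_port", name="from-edge")` would place the three components from this step on the root canvas; connecting them is a further API call not shown here.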

Learning step 3: Get real-time tweets and store them in HDFS using NiFi.

This example shows how to get real-time data from Twitter on the HDF cluster and write it into the HDP cluster. It also shows how to integrate HDP with HDF to use HDFS storage.
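In NiFi this flow is typically a `GetTwitter` processor feeding `PutHDFS`, where `PutHDFS` is pointed at the HDP cluster's `core-site.xml` and `hdfs-site.xml` through its Hadoop Configuration Resources property. To check that files are actually landing, you can list the target directory over WebHDFS. The sketch assumes a hypothetical NameNode host, the HDP 2.x default NameNode HTTP port 50070, simple (non-Kerberos) authentication, and a hypothetical `/tmp/tweets` directory.

```python
import json
import urllib.request

WEBHDFS = "http://namenode.example.com:50070/webhdfs/v1"  # hypothetical NameNode
TARGET_DIR = "/tmp/tweets"  # hypothetical value of PutHDFS's Directory property


def parse_liststatus(payload: str) -> list:
    """Return (name, size_in_bytes) for each regular file in a LISTSTATUS reply."""
    statuses = json.loads(payload)["FileStatuses"]["FileStatus"]
    return [(s["pathSuffix"], s["length"]) for s in statuses if s["type"] == "FILE"]


def list_landed_files(directory: str = TARGET_DIR, user: str = "nifi") -> list:
    # user.name only works with simple auth; a Kerberized cluster needs SPNEGO.
    url = f"{WEBHDFS}{directory}?op=LISTSTATUS&user.name={user}"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return parse_liststatus(resp.read().decode("utf-8"))
```

Running `list_landed_files()` a few times while the flow is started should show the file count growing as tweets arrive.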

Learning step 4: Route real-time logs or other ingested data from Kafka to HDFS using NiFi
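A NiFi flow for this step would consume from a Kafka topic (with a `ConsumeKafka` processor whose exact variant depends on the Kafka version) and write to HDFS with `PutHDFS`. To feed it test data, a small producer helps. This sketch assumes the `kafka-python` package, a hypothetical broker at `sandbox.example.com:6667` (6667 is HDP's usual Kafka port), and a hypothetical topic `app-logs`.

```python
import json
import time

BOOTSTRAP = "sandbox.example.com:6667"  # hypothetical broker address
TOPIC = "app-logs"  # hypothetical topic the NiFi consumer subscribes to


def make_log_record(level: str, message: str, ts: float = None) -> bytes:
    """Encode one log event as UTF-8 JSON, i.e. the bytes NiFi will receive."""
    record = {"ts": ts if ts is not None else time.time(), "level": level, "msg": message}
    return json.dumps(record).encode("utf-8")


def publish(records: list, producer=None, topic: str = TOPIC) -> int:
    """Send records to Kafka; a producer can be injected for testing."""
    if producer is None:
        from kafka import KafkaProducer  # pip install kafka-python
        producer = KafkaProducer(bootstrap_servers=BOOTSTRAP)
    for value in records:
        producer.send(topic, value)
    producer.flush()  # block until everything is actually delivered
    return len(records)
```

For instance, `publish([make_log_record("INFO", "service started")])` sends a single event; each Kafka message then becomes FlowFile content in NiFi before it is written to HDFS.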

Learning step 5: Send tweets from NiFi to third-party applications such as Slack

Slack Integration with Apache NiFi
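Inside NiFi this is usually done with the `PutSlack` processor and a Slack incoming webhook. The sketch below shows what such a webhook call amounts to, which is handy for testing the webhook before wiring it into the flow. The webhook URL is a placeholder, and the tweet text and user are assumed to have been extracted from the tweet JSON beforehand (for example with `EvaluateJsonPath`).

```python
import json
import urllib.request

WEBHOOK_URL = "https://hooks.slack.com/services/T000/B000/XXXX"  # placeholder, not a real hook


def slack_payload(tweet_text: str, user: str) -> bytes:
    """Build the JSON body an incoming webhook expects: at minimum a "text" field."""
    return json.dumps({"text": f"New tweet from @{user}: {tweet_text}"}).encode("utf-8")


def post_to_slack(body: bytes, url: str = WEBHOOK_URL) -> int:
    """POST the message to the webhook and return the HTTP status code."""
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status
```

A `200` from `post_to_slack(slack_payload("hello", "nifi"))` with your own webhook URL means the same URL should work in the NiFi processor.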

Learn how to install Apache NiFi on Cloudera’s QuickStart VM in our blog here. To get the best data engineering solutions for your business, reach out to us at Clairvoyant.